21 February 2018

Robophilosophy 2018: ENVISIONING ROBOTS IN SOCIETY: Should robots have feelings, too?

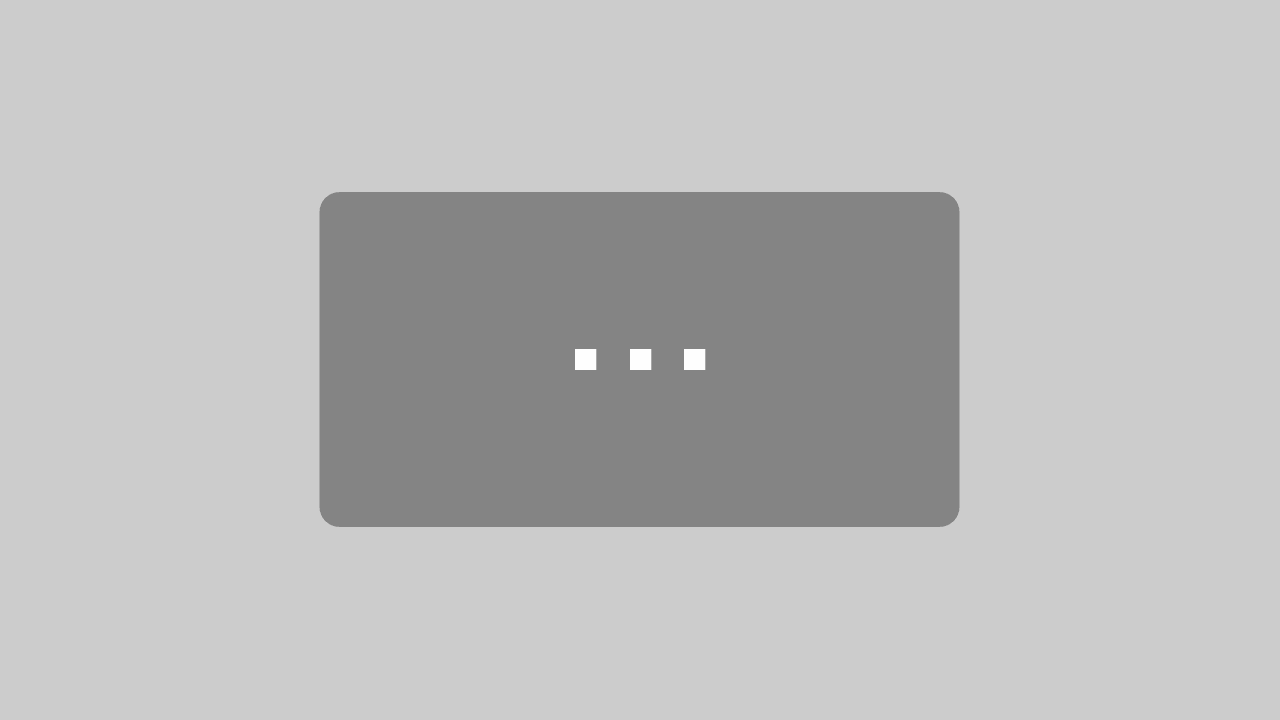

With last year’s VIENNA BIENNALE 2017: Robots. Work. Our Future, organized by the MAK in cooperation with the University of Applied Arts Vienna, the Kunsthalle Wien, the Architekturzentrum Wien, and the Vienna Business Agency, and with support from the AIT Austrian Institute of Technology as a non-university research partner, the MAK set sail into “Digital Modernity”, the age of algorithms, AI (artificial intelligence) and robots.

The exhibition Hello, Robot. Design between Human and Machine (a cooperation of the MAK, the Vitra Design Museum and Design Museum Gent) asked the crucial question of how we want to shape our lives together with those artificial creatures, and how design impacts our relationship with technology and therefore with each other. It was organized in four chapters that told the story of a convergence of human and machine and explored the ethical and political questions that may arise from the enormous technological advances of our time.

MAK Exhibition View, „VIENNA BIENNALE 2017: Robots. Work. Our Future“

„Hello, Robot. Design between Human and Machine“, MAK Exhibition Hall

© Peter Kainz/MAK

I was therefore delighted that the Robophilosophy Conference 2018 took place in Vienna, organized by the Department of Philosophy of Media and Technology of the University of Vienna. I had the chance to attend a full day of very interesting session talks and keynotes on the topic of “Artificial Sociality”.

International experts from various fields were invited to discuss the implications of the rise of robots in human society. I want to focus on a specific theme that was central to my research for the MAK’s exhibition projects in 2017 (“Hello, Robot” and “Artificial Tears”) and will play a major role in the programming of the VIENNA BIENNALE 2019: what is it that makes us “human”, what role do emotional capabilities and ethical values play, and how can we shape our artificially intelligent systems to act ethically and sustainably?

I attended the session talk by Professor Philip Brey of the University of Twente, the Netherlands, who posed the question: “Should Robots be Equipped with Emotions?”

“What are Emotions?”, Session Talk with Philip Brey, 16 Feb 2018

© Marlies Wirth

To refresh our minds on the matter, the session talk started with a scientific clarification of what emotions actually are, noting that humans have both pleasant and unpleasant emotions. Humans experience and express a range of affective states: fear and surprise (reflex-like or primary emotions), jealousy and shame (learned or secondary emotions), tension and malaise (“moods” or background emotions that set the tone of your day and may appear without a clear cause), hunger and thirst (body-maintenance or homeostatic drives), and, last but not least, hedonic states like pleasure and pain (which cause positive or negative emotions, depending on whether they are experienced as desired or threatening). This gives humans the ability to feel and cope with a wide array of emotional experiences.

Now, should robots be able to do the same? How would this affect their behavior towards humans in social interaction, say in care of the elderly, or in play or therapy situations with children or patients with autism or dementia?

To clarify the emotional capabilities of robots, Philip Brey continued his session talk by specifying four types that could be interesting in this regard: emotion recognition (e.g. a robot that can recognize a happy or sad face and behavior in a human), reasoning about emotions (e.g. a robot talking you out of being sad or preventing you from hurting yourself or others), expressing emotions (e.g. a robot that can communicate an emotion verbally or nonverbally), and the most complex one: having emotions that influence cognitive processes and outcomes (“synthetic robot emotions” that influence their decisions on the same level as human emotions influence our decisions or actions).

Guy Hoffman, Oren Zuckerman, „Kip, an Empathy Robotic Object“

„Kip“ is a robot developed to serve as a companion that can help facilitate human conversation. Although it cannot understand what a person is saying, it can detect and evaluate the emotional “tone” of the discussion, to which it can respond in a variety of ways. Kip can thus function as an instrument for maintaining (self)control in everyday conversations as well as a measuring device for friendly encounters.

Object from “Hello, Robot. Design between Human and Machine”

© Media Innovation Lab (miLAB), IDC Herzliya, Israel

Thinking about this further, there are several arguments for and against robots having emotions. The most important requirement is their ethical application: the privacy of people’s emotional states must be respected (e.g. no data about a person’s depression may be leaked at any time), and information about their emotions must not be used to manipulate them. Humans do this to other humans all the time, be it with good or evil intentions, but robots should not be in a position to do so and must respect humans’ rights and interests at all times.

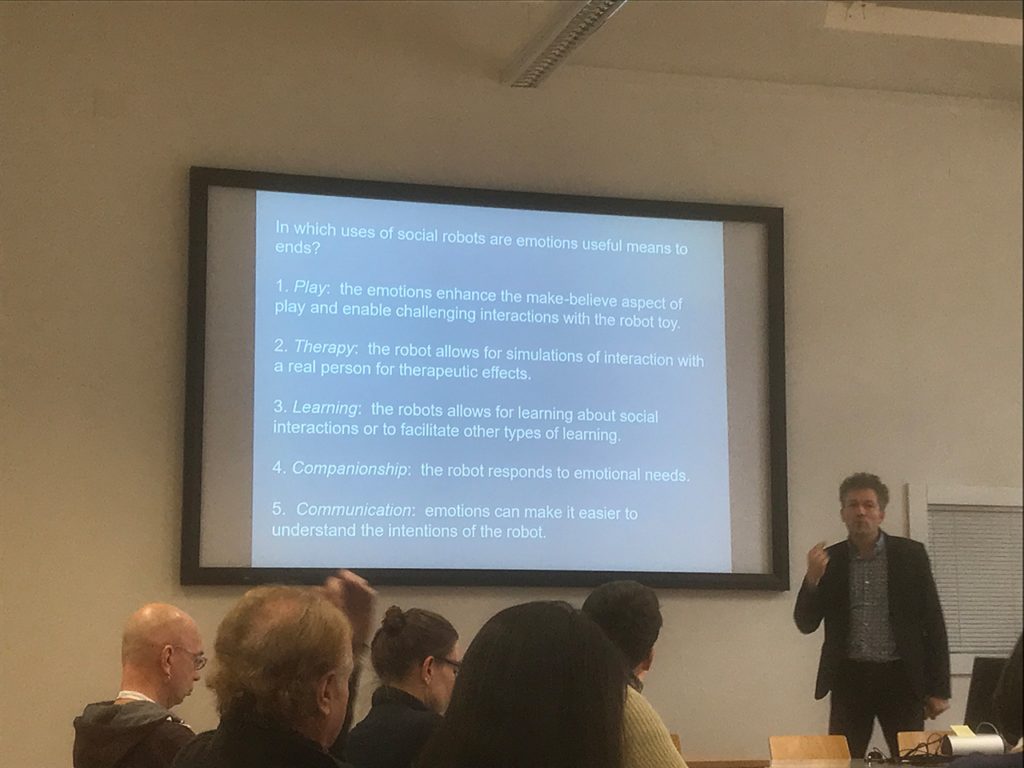

Given those ethical considerations, the use of emotions in social robots may be useful when it comes to certain interactions such as play, therapy, learning, companionship or communication. No genuine emotions are required to achieve these interactions: it is a make-believe game in which it remains clear to the human that the robot is displaying a simulation of emotional behavior.

AKA, „Musio K“

Musio is a robot for everyday use with a design aimed specifically at children and adolescents. In order to adapt to the age of its user, Musio has three settings: simple, smart, and genius. It can help its user learn English, serve as an appointments calendar, prevent boredom, or, in conjunction with other household devices, function as a control centre for the Smart Home.

Object from „Hello, Robot. Design between Human and Machine“

© AKA, LLC

“In which uses of social robots are emotions useful?”, Session Talk with Philip Brey, 16 Feb 2018

© Marlies Wirth

The arguments for emotions in social robots are therefore:

Social interaction – successful communication with humans requires that robots can mimic human cognition and behavior to create the basis for a “meaningful” interaction.

Morality – social robots have to make moral decisions sometimes, and those require capabilities for ethical reasoning. The robot thus requires human emotions such as empathy, compassion, sorrow or remorse.

Arguments against emotional capabilities in robots are:

Improper attachment – if robots are equipped with emotions, people will develop inappropriate feelings of attachment towards them, even though robots remain inanimate objects.

Manipulation – robots could use emotions to manipulate humans into thinking or doing something that is not the human user’s goal. If a robot persuades a human to think or do something, it should be based on reasons, not emotions.

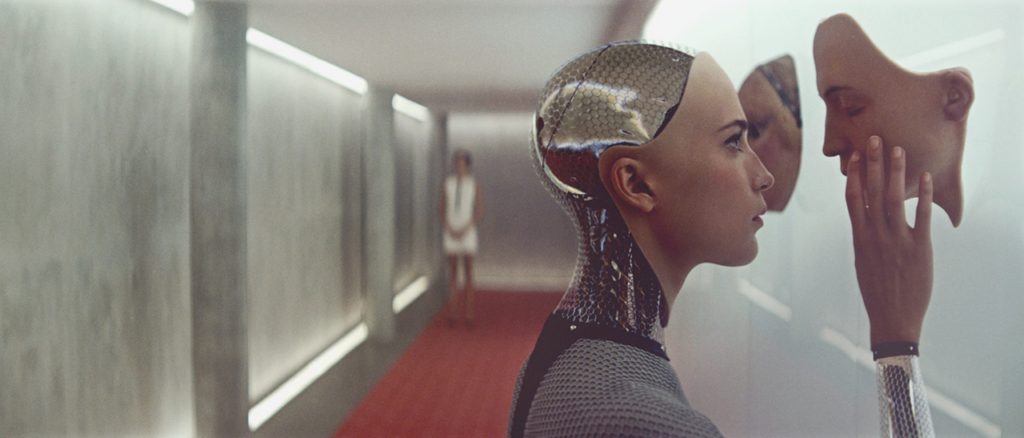

Alex Garland, „Ex Machina“

The British film „Ex Machina“ (2015) tells the story of Caleb, a programmer who is invited by his boss to a secret research station where he is to test the female android Ava and determine whether her faculty of thought is equal to that of humans. Ava engages Caleb in intelligent discussions and manages to convince him of her own intelligence. After the two develop an emotional relationship, Ava ultimately persuades Caleb to help her escape from the research station.

Object from „Hello, Robot. Design between Human and Machine“, film still

Discussions around the emotional capabilities of robots include the question of their legal status, rights, responsibility and liability, as well as the question of attributing personhood to a robot, or even the same intrinsic value as a human. Philip Brey states that, in his opinion, intrinsic value is exclusive to humans because it requires consciousness, which has thus far been displayed only in science fiction, not in real robots or AI.

One of the stated goals of the Robophilosophy Conference is to “explore how research in the Humanities, including art and art research, in the social and human sciences, can contribute to imagining and envisioning the potentials of future social interactions” with robots, autonomous vehicles and artificial intelligence. The MAK shares this goal, specifically in its research for the upcoming VIENNA BIENNALE 2019 and the related projects.

I am happy and grateful to have been part of the Robophilosophy Conference 2018. It had a very tight, multifaceted and complex program, and its topics made for an exciting brainteaser, even for those of us not involved in academia!

Marlies Wirth, Curator Digital Culture & MAK Design Collection

_________________

The International Research Conference ROBOPHILOSOPHY 2018/TRANSOR 2018, titled Envisioning Robots in Society: Politics, Power, and Public Space, was organized by the Department of Philosophy of Media and Technology, chaired by Mark Coeckelbergh (Professor of Philosophy of Media and Technology), Janina Loh (PostDoc), Michael Funk (PraeDoc) and Peter Rantaša (PhD Candidate) and their team at the University of Vienna, in cooperation with Aarhus University, from February 14 to 17, 2018.

The full outline and program can be found here: http://conferences.au.dk/robo-philosophy/

© Marlies Wirth, Curator, Digital Culture and Design Collection, MAK